MindLogger ecological momentary assessments in the Healthy Brain Network

cross-collaborative presentation

Jon Clucas

December 20, 2019

This presentation is intended to give a state-of-the-project overview of MindLogger as of the end of 2019, particularly as it applies to using the platform for ecological momentary assessments.

I recently accepted a promotion to an associate software developer role in the Computational Neuroimaging Lab, so this presentation is also intended to give a brief introduction to the nuances of the project and provide an opportunity for potential collaborators to ask questions.

If you want to play along, mindlogger.org includes links to download the mobile app for both Android and iOS. The QR code in the top right corner of these slides links to our development server's API endpoint; new users on that server are currently enrolled in an applet built from the Healthy Brain Network EMA protocol described in this presentation.

An ecological momentary assessment (EMA) is

- ecological in the sense of occurring in the embodied situational context of the subject being assessed at the time of assessment (i.e., in a natural rather than experimentally designed physical and social context),

- momentary in the sense of happening in the temporal context at which the subject is sampled (i.e., at prompted times), and

- an assessment in the sense that one records data that can be analyzed and interpreted, especially longitudinally and between subjects.
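To make the last point concrete, here is a minimal Python sketch, assuming each momentary response is stored as a record of subject, prompt time, item, and numeric value. The `EMAResponse` class, its fields, and any item names are hypothetical, not MindLogger's data model. Ordering one subject's records by prompt time gives the longitudinal view; summarizing each subject's records gives the between-subjects view.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class EMAResponse:
    """One momentary self-report (hypothetical shape, for illustration only)."""
    subject_id: str
    prompted_at: datetime  # the prompted time at which the subject was sampled
    item: str              # hypothetical item identifier, e.g. "mood"
    value: float           # e.g. a rating on a numeric scale


def within_subject_series(
    responses: Iterable[EMAResponse], item: str
) -> Dict[str, List[Tuple[datetime, float]]]:
    """Longitudinal view: each subject's answers to one item, in prompt order."""
    series: Dict[str, List[Tuple[datetime, float]]] = defaultdict(list)
    for r in sorted(responses, key=lambda r: r.prompted_at):
        if r.item == item:
            series[r.subject_id].append((r.prompted_at, r.value))
    return dict(series)


def between_subject_means(
    responses: Iterable[EMAResponse], item: str
) -> Dict[str, float]:
    """Between-subjects view: each subject's mean answer to one item."""
    return {
        subject: mean(value for _, value in points)
        for subject, points in within_subject_series(responses, item).items()
    }
```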

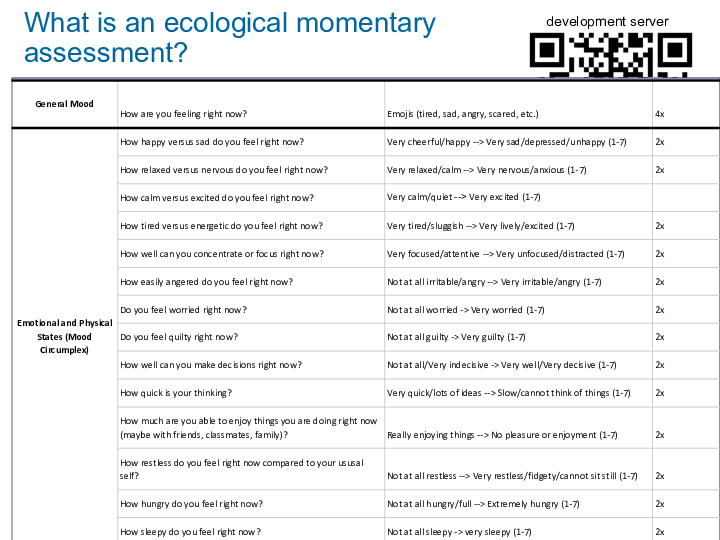

This slide shows a sample of the questions included in the EMA we are using with Healthy Brain Network participants. For this iteration of MindLogger, we are only using self-report questions, but eventually we intend to support multiple-informant protocols.

The problem motivating MindLogger's development is that gathering reliable, interoperable, contextual psychometric data is challenging. This image is a photograph of two open drawers holding just a fraction of the assessment materials currently in use in our clinic. The vision of MindLogger is to reduce the friction of distributing such assessments, allowing subjects to complete them at arbitrary or strategically chosen, contextually relevant times, and to facilitate sharing data across assessments.

MindLogger is a platform including

- an admin panel where applets can be configured from protocols,

- a web application and mobile applications for both Android and iOS, where users can perform activities in their applets,

- a RESTful API which allows MindLogger’s apps and other interfaces to get data in and out of a server, and

- reproschema, a schema that affords interoperability between protocols within MindLogger and between MindLogger and other platforms.

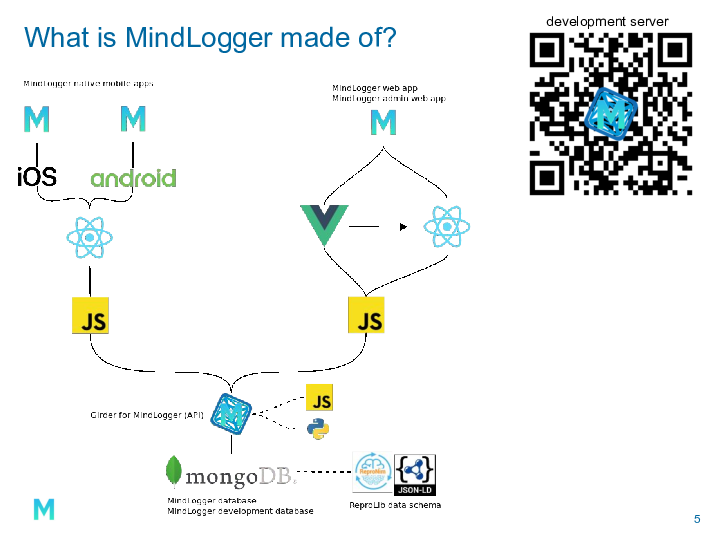

This branching architecture diagram shows the core technologies in use in MindLogger’s current solution stack.

At the top, we have the three applications, with mobile on the left and web on the right. The two mobile apps have feature parity with each other, but the mobile and web apps do not.

The mobile apps are coded primarily in React Native, a JavaScript framework. The web app and admin panel (which is also a web application) are coded in Vue and React, two other JavaScript frameworks.

All of the applications (mobile, web, and admin panel) retrieve data from and submit data through an API on a server running Girder for MindLogger, a customized implementation of Kitware’s Girder data management platform.

Girder for MindLogger allows access-controlled interactions with our MongoDB database, which we host in the cloud via MongoDB Atlas.
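As a rough sketch of what access control looks like from a client: upstream Girder issues a token from its `GET /api/v1/user/authentication` endpoint (using HTTP Basic credentials) and expects that token in a `Girder-Token` header on later requests. The Python below assumes Girder for MindLogger keeps that upstream behavior; the server URL is a placeholder, and the functions only build requests rather than sending them.

```python
import base64
import json
import urllib.request

# Placeholder base URL (hypothetical); substitute the server you actually use.
API_ROOT = "https://mindlogger-dev.example.org/api/v1"


def authentication_request(username: str, password: str) -> urllib.request.Request:
    """Build (but do not send) a request for a Girder token via HTTP Basic auth."""
    credentials = base64.b64encode(f"{username}:{password}".encode()).decode()
    return urllib.request.Request(
        f"{API_ROOT}/user/authentication",
        headers={"Authorization": f"Basic {credentials}"},
        method="GET",
    )


def token_from_response(body: bytes) -> str:
    """Extract the token from upstream Girder's authentication response JSON."""
    return json.loads(body)["authToken"]["token"]


def authorized_request(path: str, token: str) -> urllib.request.Request:
    """Build a request that presents the token in a Girder-Token header."""
    return urllib.request.Request(
        f"{API_ROOT}/{path.lstrip('/')}",
        headers={"Girder-Token": token},
    )


# Usage (not executed here): send with urllib.request.urlopen(...), e.g.
# authorized_request("user/me", token) asks upstream Girder for the current user.
```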

The data in our database are stored as BSON in reproschema syntax. Reproschema is itself defined in JSON-LD, a JSON-based RDF serialization. In short, the data are stored in binary as JavaScript objects and arrays with well-defined, interoperable semantics.
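The sketch below illustrates the JSON-LD idea with a deliberately simplified, hypothetical item: an `@context` maps short prefixes to full IRIs, so a compact key such as `rs:question` stands for a globally unique property. The `rs` namespace IRI and the item's fields are placeholders rather than reproschema's actual context, and `expand_keys` only resolves prefixes in a flat node; a real JSON-LD processor does far more (nesting, remote contexts, value expansion). The BSON encoding that MongoDB applies on storage is not shown.

```python
import json

# Hypothetical, simplified item in the spirit of reproschema. The "rs"
# namespace IRI is a placeholder, not reproschema's real context.
ITEM = {
    "@context": {
        "schema": "http://schema.org/",
        "rs": "https://example.org/reproschema#",
    },
    "@id": "mood_rating",
    "@type": "rs:Field",
    "schema:name": "Mood rating",
    "rs:question": "How is your mood right now?",
    "rs:inputType": "slider",
}


def expand_term(term: str, context: dict) -> str:
    """Resolve a compact IRI such as 'rs:Field' using the prefixes in @context."""
    prefix, sep, suffix = term.partition(":")
    if sep and prefix in context:
        return context[prefix] + suffix
    # Keywords like '@id', unprefixed terms, and unknown prefixes pass through.
    return term


def expand_keys(node: dict) -> dict:
    """Copy a flat JSON-LD node, expanding prefixed keys and the @type value."""
    context = node.get("@context", {})
    expanded = {}
    for key, value in node.items():
        if key == "@context":
            continue
        if key == "@type":
            value = expand_term(value, context)
        expanded[expand_term(key, context)] = value
    return expanded


print(json.dumps(expand_keys(ITEM), indent=2))
```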

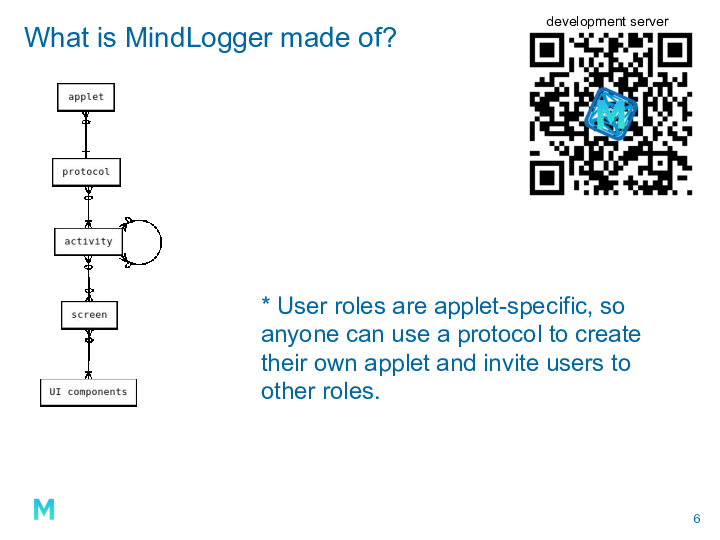

The primary shareable unit of assessment in MindLogger is the protocol, which is defined in reproschema syntax.

An applet is created from exactly one protocol and adds user roles and customizations, e.g., scheduling.

A protocol is composed of one or more activities.

An activity is composed of any number of screens and other (sub)activities.

A screen is composed of one or more user interface components, e.g., stimuli or user input fields.
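The containment rules above can be sketched as Python dataclasses. This is an illustrative model under stated assumptions, not MindLogger's or reproschema's schema: screen components are plain strings, and the shapes of the applet's `roles` and `schedules` fields are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterator, List, Union


@dataclass
class Screen:
    """One or more UI components, e.g. stimuli or user input fields."""
    components: List[str]

    def __post_init__(self) -> None:
        if not self.components:
            raise ValueError("a screen needs at least one component")


@dataclass
class Activity:
    """Any number of screens and nested (sub)activities."""
    name: str
    children: List[Union[Screen, "Activity"]] = field(default_factory=list)

    def screens(self) -> Iterator[Screen]:
        """Yield every screen in this activity, depth first."""
        for child in self.children:
            if isinstance(child, Screen):
                yield child
            else:
                yield from child.screens()


@dataclass
class Protocol:
    """The primary shareable unit: one or more activities."""
    name: str
    activities: List[Activity]

    def __post_init__(self) -> None:
        if not self.activities:
            raise ValueError("a protocol needs at least one activity")


@dataclass
class Applet:
    """Exactly one protocol, plus user roles and customizations."""
    protocol: Protocol
    # Hypothetical shapes: user -> roles, and activity name -> daily times.
    roles: Dict[str, List[str]] = field(default_factory=dict)
    schedules: Dict[str, List[str]] = field(default_factory=dict)
```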

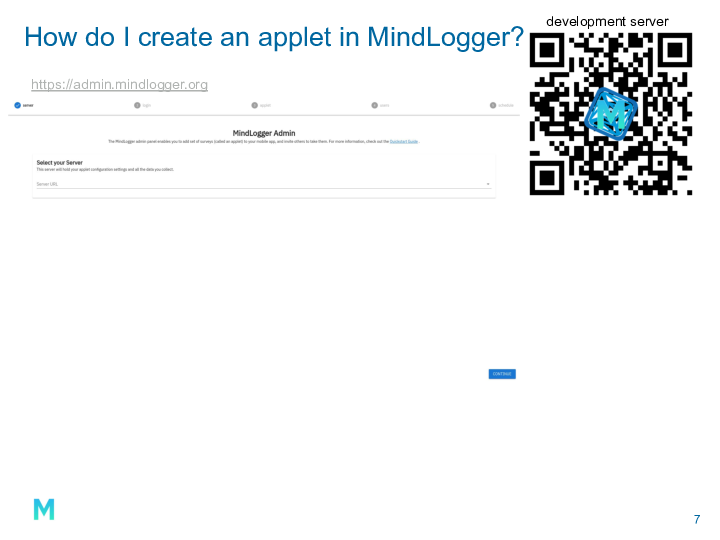

The MindLogger admin panel allows anyone to

- choose a server (production, development, localhost, or custom),

- log in to that server,

- create an applet by clicking the plus button in the bottom right of the screen and either

  - loading a protocol from a web-accessible URL (reproschema hosts many in its GitHub repository) or

  - launching the protocol builder,

- manage user roles for that newly created applet (or for a user-selected applet on which the logged-in user has coordinator privileges), and

- set schedules for activities in that applet.

If you were an early tester of MindLogger, you’ll remember needing a special link or an invitation to get the latest build. Test versions are now distributed through Apple TestFlight and the Google Play Store, so anyone can download the latest mobile app directly and try it for themselves; updates arrive through the same channels.

For limited uses, MindLogger is finally working well, but much more needs to be done before the platform will be robust. The five points on this slide are the areas that I consider the weakest as of now.

Q & A

Is it possible to customize the assessment schedule so that participants can do assessments multiple times per day?

Yes! Schedules can be set via the calendar widget in the admin panel, or by manually writing or editing the schedule JSON and submitting it through the API.
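For example, a schedule with several prompts per day could be expanded into concrete prompt times as below. The JSON field names (`activity`, `start`, `days`, `times`) are hypothetical and chosen for illustration; they are not the schedule format that the admin panel or API actually uses.

```python
import json
from datetime import date, datetime, time, timedelta

# Hypothetical schedule document; field names are illustrative only.
SCHEDULE_JSON = """
{
  "activity": "daily check-in",
  "start": "2019-12-20",
  "days": 2,
  "times": ["09:00", "13:00", "19:30"]
}
"""


def prompt_times(schedule: dict) -> list:
    """Expand a multiple-times-per-day schedule into every prompt datetime, in order."""
    start = date.fromisoformat(schedule["start"])
    daily = sorted(time.fromisoformat(t) for t in schedule["times"])
    return [
        datetime.combine(start + timedelta(days=d), t)
        for d in range(schedule["days"])
        for t in daily
    ]


for when in prompt_times(json.loads(SCHEDULE_JSON)):
    print(when.isoformat(timespec="minutes"))
```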